Hausmann Study

A comparison of self-explanation to instructional explanation

Robert Hausmann and Kurt VanLehn

Abstract

This in vivo experiment compared the learning that results from either reading an explanation (instructional explanation) or generating it oneself (self-explanation). Students studied a physics example line by line. For half the students, the example lines were incomplete (the explanations connecting some lines were missing), whereas for the other half, the example lines were complete. Crossed with this was an attempted manipulation of the student’s studying strategy. Half the students were instructed and prompted to self-explain each line, while the other half were prompted to paraphrase it, which has been shown in earlier work to suppress self-explanation.

Of the four conditions, one was key to testing our hypothesis: the condition where students viewed complete examples and were asked to paraphrase them. If the learning gains from this condition had been just as high as those from the conditions where self-explanation was encouraged, then instructional explanation would have been just as effective as self-explanation. On the other hand, if the learning gains were just as low as those from the condition where instructional explanations were absent and self-explanation was suppressed via paraphrasing, then instructional explanation would have been just as ineffective as no explanation at all.

Preliminary results suggest that the latter case occurred, so instructional explanations were not as effective as self-explanations on tests of basic learning. Analyses are in progress on measures of robust learning.

Background and Significance

Glossary

- Physics example line: lines from an example are organized around sub-goals, and they include a demonstration of an application of a single knowledge component.

- Complete vs. incomplete example: an incomplete example omits the justification for applying a knowledge component, whereas a complete example includes both the application of the knowledge component as well as its justification.

- Instructional explanation: an instructional explanation is generated for a student by an authoritative figure (the textbook, teacher, or researcher). In this research context, the instructional explanation was generated by the experimenters and was delivered to the student in a voice-over narration of an expert solving a problem in Andes.

Research question

How is robust learning affected by self-explanation vs. instructional explanation?

Independent variables

Two variables were crossed:

- Did the example present an explanation with each step or present just the step?

- After each step (and its explanation, if any) was presented, students were prompted to either further explain the step or paraphrase the step in their own words.

The condition where explanations were presented in the example and students were asked to paraphrase them is considered the “instructional explanation” condition. The two conditions where students were asked to self-explain the example lines are considered the “self-explanation” conditions. The remaining condition, where students were asked to paraphrase examples that did not contain explanations, is considered the “no explanation” condition. Note, however, that nothing prevents a student in a paraphrasing condition from self-explaining (and vice versa).
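The mapping from the two crossed variables to the three condition labels can be summarized in a short sketch. The function name `condition_label` and the string values used for the two variables are illustrative assumptions, not identifiers taken from the study materials.

```python
from itertools import product

EXAMPLE_TYPES = ("complete", "incomplete")      # hypothetical value names
PROMPT_TYPES = ("self-explain", "paraphrase")   # hypothetical value names


def condition_label(example: str, prompt: str) -> str:
    """Return the label the text assigns to one cell of the 2 x 2 design."""
    if example not in EXAMPLE_TYPES:
        raise ValueError(f"unknown example type: {example!r}")
    if prompt not in PROMPT_TYPES:
        raise ValueError(f"unknown prompt type: {prompt!r}")
    if prompt == "self-explain":
        # Both self-explanation cells share one label, regardless of
        # whether the example included explanations.
        return "self-explanation"
    if example == "complete":
        # Explanations were presented and the student only paraphrased.
        return "instructional explanation"
    # No explanation presented, and self-explanation was not prompted.
    return "no explanation"


for example, prompt in product(EXAMPLE_TYPES, PROMPT_TYPES):
    print(f"{example:10s} + {prompt:12s} -> {condition_label(example, prompt)}")
```

The labels describe the intended treatment only; as noted above, a student's actual behavior may differ from the prompt they received.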

Hypothesis

For these well-prepared students, self-explanation should not be too difficult. That is, the instruction should be below the students’ zone of proximal development. Thus, the learning-by-doing path (self-explanation) should elicit more robust learning than the alternative path (instructional explanation) wherein the student does less work.

As a manipulation check on the utility of the explanations in the complete examples, we hypothesize that the instructional explanation condition should produce more robust learning than the no-explanation condition.

Dependent variables

- Normal Learning

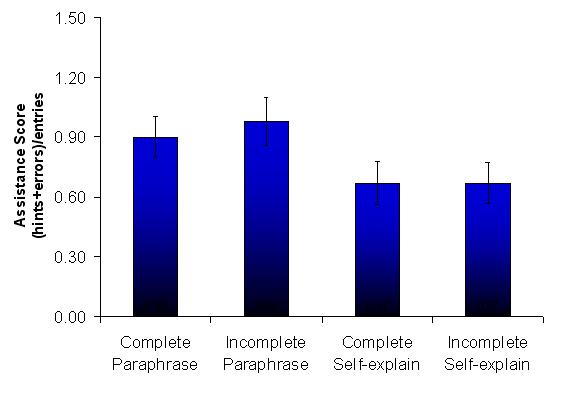

- Near transfer, immediate: During training, examples alternated with problems, and the problems were solved using Andes. Each problem was similar to the example that preceded it, so performance on it is a measure of normal learning (near transfer, immediate testing). The log data were analyzed and assistance scores (sum of errors and help requests, normalized by the number of transactions) were calculated.

- Robust Learning

- Near transfer, retention: On the student’s regular mid-term exam, one problem was similar to the training problems. Since this exam occurred a week after the training, and the training took place in just under 2 hours, the student’s performance on this problem is considered a test of retention.

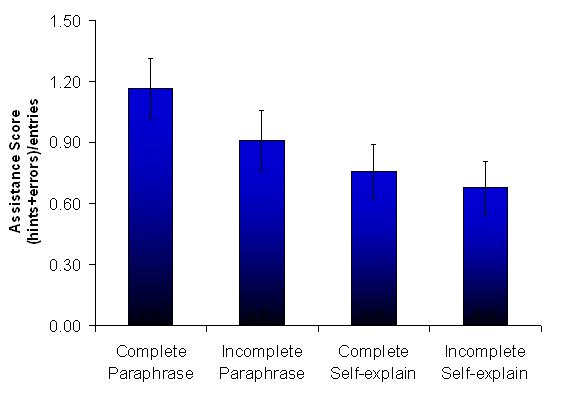

- Near and far transfer: After training, students did their regular homework problems using Andes. Students did them whenever they wanted, but most completed them just before the exam. The homework problems were divided based on similarity to the training problems, and assistance scores were calculated.

- Acceleration of future learning: The training was on electrical fields, and it was followed in the course by a unit on magnetic fields. Log data from the magnetic field homework were analyzed as a measure of acceleration of future learning.
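The assistance score described above (sum of errors and help requests, normalized by the number of transactions) can be sketched as follows. The `LogSummary` type, its field names, and the example counts are hypothetical; the actual Andes log format and the study's analysis scripts are not described here.

```python
from dataclasses import dataclass


@dataclass
class LogSummary:
    """Per-student, per-problem counts (hypothetical field names)."""
    errors: int
    help_requests: int
    transactions: int


def normalized_assistance_score(log: LogSummary) -> float:
    """(errors + help requests) / transactions; lower means less assistance."""
    if log.transactions <= 0:
        raise ValueError("transactions must be positive")
    return (log.errors + log.help_requests) / log.transactions


# Made-up counts for illustration only, not data from the study.
example = LogSummary(errors=3, help_requests=2, transactions=20)
print(normalized_assistance_score(example))  # 0.25
```

Because the score counts errors and help requests, a lower value indicates better performance, which is how the comparisons in the Results section should be read.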

Results

- Normal Learning

- Near transfer, immediate: The self-explanation condition demonstrated lower normalized assistance scores than the paraphrase condition, F(1, 73) = 6.189, p = .015, ηp2 = .078.

- Robust Learning

- Near transfer, retention: Results on this measure were mixed. While there were no reliable main effects or interactions, the complete self-explanation condition scored marginally higher than the complete paraphrase condition (LSD, p = .064). Moreover, we analyzed the students’ performance on a homework problem that was isomorphic to the chapter exam problem in that they shared an identical deep structure (i.e., both analyzed the motion of a charged particle moving in two dimensions). The self-explanation condition had reliably lower normalized assistance scores than the paraphrase condition, F(1, 27) = 4.07, p = .05, ηp2 = .13.

- Near and far transfer:

- Acceleration of future learning: There was no effect for example completeness; however, the self-explanation condition demonstrated lower normalized assistance scores than the paraphrase condition, F(1, 46) = 5.223, p = .027, ηp2 = .102.
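As a consistency check on the effect sizes reported above, partial eta squared (written ηp2 in the text) can be recovered from an F statistic and its degrees of freedom using the standard identity ηp² = (F · df_effect) / (F · df_effect + df_error). The sketch below applies that identity to the three reported F tests; the function name and the short labels are illustrative choices, and the inputs are only the values quoted in the text.

```python
def partial_eta_squared(f_value: float, df_effect: int, df_error: int) -> float:
    """Recover partial eta squared from F and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# (label, F, df_effect, df_error, reported effect size) as quoted in the text.
reported = [
    ("near transfer, immediate", 6.189, 1, 73, 0.078),
    ("isomorphic homework problem", 4.07, 1, 27, 0.13),
    ("acceleration of future learning", 5.223, 1, 46, 0.102),
]

for label, f_value, df_effect, df_error, eta in reported:
    computed = partial_eta_squared(f_value, df_effect, df_error)
    print(f"{label}: computed {computed:.3f}, reported {eta}")
```

All three computed values agree with the reported effect sizes at the precision given in the text.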

Explanation

This study is part of the Interactive Communication cluster, and its hypothesis is a specialization of the IC cluster’s central hypothesis. The IC cluster’s hypothesis is that robust learning occurs when two conditions are met:

- The learning event space should have paths that are mostly learning-by-doing along with alternative paths where a second agent does most of the work. In this study, self-explanation comprises the learning-by-doing path and instructional explanations are ones where another agent (the author of the text) has done most of the work.

- The student takes the learning-by-doing path unless it becomes too difficult. This study tried (successfully, it appears) to control the student’s path choice. It showed that when students took the learning-by-doing path, they learned more than when they took the alternative path.

The IC cluster’s hypothesis actually predicts an attribute-treatment interaction (ATI) here. If some students were under-prepared and thus would find the self-explanation path too difficult, then those students would learn more on the instructional-explanation path. ATI analyses have not yet been completed.

Annotated bibliography

- Presentation to the PSLC Advisory Board, December, 2006

- Presentation to the NSF Follow-up Site Visitors, September, 2006

- Preliminary results were presented to the Intelligent Tutoring in Serious Games workshop, Aug. 2006

- Presentation to the NSF Site Visitors, June, 2006
