Intelligent Writing Tutor

Latest revision as of 02:32, 8 October 2008

| PI | Teruko Mitamura |

|---|---|

| Graduate Student | Ruth Wylie |

| Faculty | Ken Koedinger |

| Others with > 160 hours | Jim Rankin |

| Start date study 1 | July 2006 |

| End date study 1 | September 2006 |

| Start date study 2 | September 2007 |

| End date study 2 | May 2008 |

| Learnlab | ESL |

| Number of students (completed) | 24 |

| Total Participant Hours | ~75 hours |

| Datashop? | Yes |


First language effects on second language grammar acquisition

Teruko Mitamura, Ruth Wylie, and Jim Rankin

Project Advisors: Ken Koedinger

Abstract

In our first study, motivated by both classroom needs and learning science questions, we developed two computer-based systems to help students learn the English article system (a, an, the, null). The first system, a menu-based task, mimics cloze activities found in many ESL textbooks. The second, a controlled-editing task, gives students practice with both detecting errors and producing the correct response. Results from a think-aloud study show significant performance differences between the two tasks. Students find the controlled-editing task more challenging but appear more motivated and engaged when using the system.

The log data from Study 1, along with a Knowledge Component Analysis, has led to a Difficulty Factors Analysis of the domain. In order to determine which article constructions pose the largest challenges to students, a coding scheme was developed and each noun phrase of the target paragraphs was analyzed for both the type of article used (a, an, the, or null) and the reason for its use (e.g. ‘a’ because non-specific, singular, count noun; ‘null’ because non-specific, mass noun; ‘the’ because unique-for-all noun, ‘the’ because unique-by-definition noun; etc.).

Finally, our current work contributes to the Assistance Dilemma: when should we provide assistance to students, and when should we withhold it? Our planned studies examine whether the added difficulty imposed by the editing tutor adds extraneous or germane cognitive load.

Glossary

L1: a student's first language

Extraneous Cognitive Load: unnecessary cognitive load experienced by learners (Sweller, van Merriënboer, and Paas, 1998)

Germane Cognitive Load: cognitive load that contributes directly to learning, i.e. to schema construction and automation (Sweller, van Merriënboer, and Paas, 1998)

Background

Learning Science Motivation

Since instructional time is at a premium, it is important to understand how students learn and retain information in order to design efficient and effective ways to teach them. This work compares the effects of two different tasks on student performance. In one condition, students are asked only to select the correct response (menu-based task). In the second condition, students are asked to correct errors by first detecting the error and then selecting the correct response (controlled-editing task). The trade-off between explicitly teaching both error-detection and production skills versus fully concentrating on production skills remains an empirical question.

One thought is that if students are highly skilled in production, then they will get error detection for free. For example, if students, while reading a text, automatically produce the correct article for each noun phrase, they will be able to recognize errors as the places where the text differs from their generated response. However, as many teachers have suggested, the goal of completely error-free production may be unrealistic, and students may need explicit practice both detecting errors and producing the correct response. This is supported by studies showing that students who were responsible for producing answers and detecting their mistakes made greater learning gains than students who were responsible for generation alone (Mathan & Koedinger, 2003).

Educational Motivation

One of the main motivations for developing and studying tutors specifically for English article use is that students have great difficulty learning these skills. English as a Second Language (ESL) instructors report that teaching the English article system is one of their biggest challenges [1]. This was supported by an analysis of student essays collected from the Pittsburgh Science of Learning Center’s English LearnLab during Summer 2005: article errors represented approximately 15% of all the errors present. Nevertheless, many English language teachers are reluctant to spend much time on teaching articles, since article errors do not often lead to communication failure (e.g., people generally understand the phrase “Yesterday, I went to store” even though “Yesterday, I went to the store” is correct). This is not to say that teachers do not recognize the harmful effects that grammar mistakes have on the credibility of written work (Master, 1997), simply that the ratio of time spent to communication gains does not justify spending large amounts of time on the topic in the typical ESL classroom. Thus, a tutoring system that complements in-class instruction, but which students access in their own time, is likely to be well received by both over-committed teachers and students looking for additional practice opportunities.

Research Questions

How should we design instructional systems to maximize student learning and long-term retention for the English article system?

Goals of Study 1 (Summer 2006): Collect performance data for the editing and menu interfaces to address two questions: (1) Which interface do students find more challenging? (2) Which knowledge components do students find more challenging?

Goals of Study 2 (on-going): Collect learning data using the two tutoring systems to address the question: Does the added difficulty of the editing tutor lead to more robust learning than the simpler menu-based tutor?

Independent variables

Study 2:

Students are randomly assigned to either the high-assistance or the low-assistance group. Students in the high-assistance group use the menu-based tutor, while students in the low-assistance group use the controlled-editing tutor.

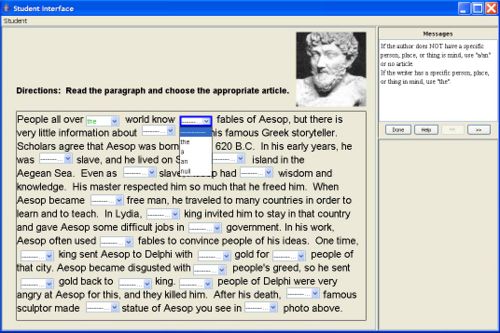

The menu-based tutor, built using the Cognitive Tutor Authoring Tools (CTAT) (Koedinger et al., 2004), is similar to the cloze, or fill-in-the-blank, activities found in many ESL textbooks. Using this interface, students select an article from each drop-down menu in order to complete the paragraph. Students do not need to identify where errors exist; they simply choose a response for each box (Figure 1).

Fig. 1. Menu-based interface for Production Only Task

The hints available during this task guide students through the article selection process. When students first ask for a hint, they are presented with the basic dichotomy between the definite (the) and indefinite (a, an, or null) articles. The second hint provides further guidance with respect to the specific noun phrase with which the student is working, while the third, and final, hint gives the student the answer to the problem as well as an explanation of why that answer is correct. For example, given the noun phrase, People all over ___ world, the final hint reads, “Please insert the into the blank. The definite article is used here because there is only one world, so world is a unique noun. Examples of other unique nouns are the moon, the internet, and the sky.”

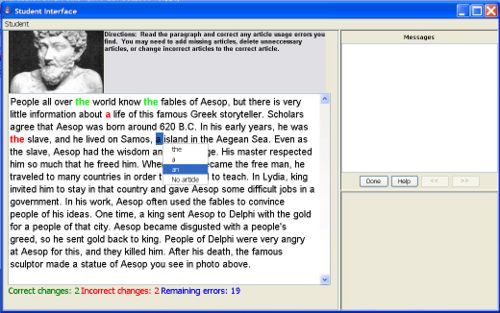

The controlled-editing tutor is also implemented via CTAT. It allows students to insert, remove, or change articles anywhere in the text. However, only articles can be edited, which prevents students from completely rewriting sentences in order to avoid certain grammar constructions. In this interface, students must both detect the error and produce the correct response (Figure 2).

Students can also ask for hints while using this system. Since students are now asked to identify as well as correct the errors, the first two levels of hints aid in the detection process by narrowing the search space in which students look for an error (e.g. “Look for an error in the second sentence.”). The subsequent three levels of hints are identical to the hints given in the production-only task.

Fig. 2. Controlled-Editing interface for Detection + Production Task
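The hint structure described above (three production hints in the menu-based tutor, preceded by two detection hints in the controlled-editing tutor) can be sketched as follows. This is only an illustration under stated assumptions: the function names, the placeholder hint wording, and the rule that repeated requests stay on the final hint are all hypothetical and are not taken from the CTAT tutors themselves.

```python
# Hypothetical sketch of the hint levels; hint wording is placeholder text.
PRODUCTION_HINTS = [
    "Decide between the definite (the) and indefinite (a, an, or null) articles.",
    "Guidance specific to the noun phrase being worked on.",
    "The answer, with an explanation of why it is correct.",
]

# Detection hints narrow the search space for the error.
DETECTION_HINTS = [
    "Look for an error in the second sentence.",
    "A narrower pointer to where the error is (assumed wording).",
]


def hint_sequence(interface):
    """Return the ordered hint levels for the given tutor interface."""
    if interface == "menu":
        return list(PRODUCTION_HINTS)
    if interface == "editing":
        # Detection hints come first, then the same three production hints.
        return DETECTION_HINTS + PRODUCTION_HINTS
    raise ValueError(f"unknown interface: {interface!r}")


def next_hint(interface, requests_so_far):
    """Return the hint for the next request; assumed to stay on the last level."""
    hints = hint_sequence(interface)
    index = min(requests_so_far, len(hints) - 1)
    return hints[index]
```

For example, `next_hint("editing", 2)` returns the first production hint, because the two detection hints have already been shown.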

Hypothesis

Study 2: Student Learning

Competing Hypotheses:

H1: If withholding assistance means that an extraneous process is now required, then learning will be worse.

H2: If withholding assistance means that an essential process is now required, then learning will be better.

Dependent variables

Study 1: Performance Data

This study used a series of post-tests that measure both normal and robust learning, including:

- Normal post-test, immediate: Immediately following instruction, students will complete their first post-test in order to measure the effectiveness of the training itself. These tasks will be a measure of normal learning (near transfer, immediate testing).

- Normal post-test, long-term retention: Additional post-tests will be administered 3, 10, 20, and 35 days after initial instruction. These measures will be similar to the ones students encountered during training but will assess the more robust learning measure of long-term retention.

Study 2: Learning Data

All students will take two post-tests - one in the menu-based format and the other in the controlled-editing format. In this way we can gather both normal learning gains (by comparing pre- and post-test scores on the assessments that matched the tutor interface) as well as transfer (by comparing pre- and post-test scores on the other assessment). See table below.

| Tutor Interface | Normal Assessment | Transfer Assessment |

|---|---|---|

| Edit | Edit | Menu |

| Menu | Menu | Edit |
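The mapping in the table above can be sketched in code. The sketch below assumes that a gain is the simple difference between post-test and pre-test scores; the source only says the scores are compared, so the gain formula, the function name, and the example scores are all hypothetical.

```python
# Which assessment format counts as "normal" vs "transfer" for each tutor,
# following the table above.
ASSESSMENT_FOR = {
    "edit": {"normal": "edit", "transfer": "menu"},
    "menu": {"normal": "menu", "transfer": "edit"},
}


def learning_gains(tutor, pre, post):
    """Return normal and transfer gains for a student who used `tutor`.

    `pre` and `post` map an assessment format ("edit" or "menu") to a score.
    A gain is assumed here to be post-test score minus pre-test score.
    """
    gains = {}
    for kind, fmt in ASSESSMENT_FOR[tutor].items():
        gains[kind] = post[fmt] - pre[fmt]
    return gains


# Hypothetical scores (proportion correct), for illustration only.
pre_scores = {"edit": 0.5, "menu": 0.75}
post_scores = {"edit": 0.75, "menu": 0.875}
print(learning_gains("edit", pre_scores, post_scores))
# {'normal': 0.25, 'transfer': 0.125}
```

For a student in the menu condition, the same scores would be read the other way around: the menu assessment gives the normal gain and the edit assessment gives the transfer gain.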

Explanation

This study is part of the Fluency and Refinement cluster. The main hypothesis of this cluster is that the structure of instructional activities and student’s prior knowledge play critical roles in developing robust learning. Since students learning English are already fluent in at least one language, we can utilize this fact to better understand how a student’s prior knowledge affects acquisition of new knowledge components. The learning event space is described as follows:

Start

1. Guess
    - 1.1 Entry is correct --> exit, with little learning
    - 1.2 Entry is incorrect --> Start
2. Use the article of one’s first language
    - 2.1 Entry is correct --> exit, with possibly mistaken learning
    - 2.2 Entry is incorrect --> Start
3. Try to apply knowledge of English article grammar
    - 3.1 Entry is correct --> exit, with learning
    - 3.2 Entry is incorrect --> Start

The second set of paths (2, 2.1, 2.2) is only available to students whose first language has articles. Thus, this analysis is an instance of the explanation schema adding new paths.

Although this study seems on the surface to be a simple matter of measuring negative transfer, the learning event space analysis suggests that learning is contingent on students’ choices of paths. In particular, if the grammatical system of the first language is quite different from the English system, students may rapidly learn that choice 2 leads only to errors (2.2) so they may stop using their first language as the default solution. In that case, the expected negative transfer may not occur.

The study includes retention and accelerated future learning measures. This allows testing of the path independence hypothesis (Klahr & Nigam; Nokes & Ohlsson), which is that when students reach a certain level of competence, it doesn’t matter how they got there; their subsequent performance, including both retention and acceleration, will be the same. The PSLC theoretical framework suggests that the path to competence does make a difference, albeit a small one. If students make several errors per learning event, and thus have to cycle through the paths above several times, then when they do eventually produce the correct response, the encoding context is cluttered with features due to the errors and feedback messages. During testing, those features will be absent. Thus, students may be less able to retrieve the appropriate knowledge components. This predicts that students who make multiple errors during training, and this is likely to be the students who have path 2 available to them, are likely to have less robust learning.

Findings

Think-Aloud Study

A pilot study was conducted to examine differences between the two task types and to gather verbal protocols in order to understand which rules and heuristics students employ when solving these problems. If students performed equally well (or poorly) using the different interfaces, one would not expect to see differences in learning. To answer these questions, we performed a think-aloud study in which we invited English language learner (ELL) participants into the lab and asked them to complete tasks using the two systems. Students were told beforehand that the only errors present within the paragraphs were article errors.

Task Content

The problem paragraphs came from intermediate and advanced-level ESL textbooks (e.g. [2], [3]). The first problem was shorter and used simpler vocabulary than the second. Students were randomly assigned to one of two groups: those in Group 1 completed the intermediate-level problem using the editing (production and detection) interface and the advanced-level problem using the menu (production-only) interface. Students in Group 2 did the opposite (see Table 1).

Table 1. Type of interface used for each problem

| | Intermediate Level Problem | Advanced Level Problem |
|---|---|---|
| Group 1 | Production + Detection Task | Production Only Task |
| Group 2 | Production Only Task | Production + Detection Task |

Participants

Participants were recruited from Carnegie Mellon University’s InterCultural Communication Center’s Academic Culture and Communication (ACC) program. The program is a six-week summer program designed for newly admitted non-native English-speaking students. Traditionally, students entering the program have high TOEFL scores (greater than 580) and at least an intermediate level of spoken fluency. ACC students are a highly educated and highly motivated group.

In total, there were six participants (three female, three male), and all were native Chinese speakers. The average age was 28 years old and all students had been learning English since middle school (average number of years = 14.4 years). Using self-report scales, participants gave an average proficiency score of 3.4 for reading, 3.0 for writing, and 2.5 for speaking (where 1 represents absolute beginner and 5 native or fluent).

The participants of this study represent a population that is perhaps more advanced than the overall target population of these systems. This is due to the think-aloud methodology employed for the pilot study. We needed students whose English ability was high enough that they were able to verbalize what they were doing. However, because English articles are often one of the last grammar points for students to master, we were able to use texts that were challenging even for these advanced participants.

Results

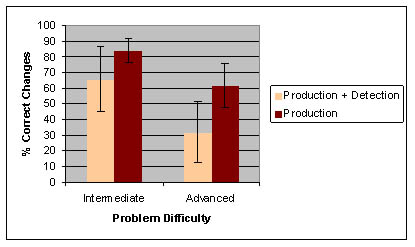

The data reveal a strong performance difference between the two tasks. Again, the difference between the two interfaces was that in the production-only version, students only had to choose which article to insert for each given box, whereas in the production and detection condition, students had to both identify and correct the errors. If students were able to correctly locate errors but had difficulty correcting them, we would expect the results to be the same regardless of the interface. However, if error identification is the true obstacle, we would expect students in the production and detection condition to perform worse than students using the production-only tutor.

For both problems, the production-only task resulted in higher accuracy, as measured by the percentage of necessary changes that were correctly made. While the difference between the two interfaces was not significant for the easier, intermediate-level problem (t = 1.45, p = 0.13), it was significant for the advanced problem (t = 2.13, p = 0.05), suggesting that when students are presented with level-appropriate texts, detecting errors is a formidable challenge.
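The accuracy measure used above, the percentage of necessary changes that were correctly made, can be sketched as follows. The data layout (a mapping from noun-phrase position to article) and the example values are hypothetical, and the sketch assumes that unnecessary edits are simply ignored by the measure, which the source does not state.

```python
def accuracy(necessary_changes, student_changes):
    """Percentage of necessary changes that the student made correctly.

    Both arguments map a noun-phrase position to an article
    ("a", "an", "the", or "null"). Edits at positions that needed
    no change are ignored (an assumption of this sketch).
    """
    if not necessary_changes:
        raise ValueError("the paragraph must contain at least one necessary change")
    correct = sum(
        1
        for position, article in necessary_changes.items()
        if student_changes.get(position) == article
    )
    return 100.0 * correct / len(necessary_changes)


# Hypothetical paragraph with four necessary changes.
necessary = {3: "the", 7: "a", 12: "null", 15: "the"}
# The student fixes positions 3 and 15, gets 7 wrong, misses 12,
# and makes an unnecessary edit at position 20.
student = {3: "the", 7: "the", 15: "the", 20: "a"}
print(accuracy(necessary, student))  # 50.0
```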

Further Information

Proposed Future Work

Proposed future work for the IWT project includes conducting a controlled in vivo experiment to answer the following question: How do scaffolding and feedback timing during learning affect transfer to authentic production?

During the unit on articles, students will be assigned to one of four conditions (See Table 2). In addition to studying the main effects of the manipulation, we will look for interactions between condition and student level (intermediate vs. advanced).

Table 2. Proposed 2x2 design

| | Menu-Based Tutor | Controlled-Editing Tutor |
|---|---|---|
| Immediate Feedback | Group 1 | Group 2 |
| Delayed Feedback | Group 3 | Group 4 |

Menu-Based Tutor

The menu-based tutor, built using CTAT, is similar to the cloze activities found in many English textbooks. Using this interface, students select an article from the drop-down menus in order to complete the paragraph. Students do not have to identify where the errors exist but must produce the correct answer for each box.

Controlled-Editing Tutor

The controlled-editing tutor is also implemented via CTAT through the introduction of a widget developed this year. The controlled-editing tutor allows students to insert, remove, or change articles anywhere in the text. However, students can only edit articles, which prevents them from completely rewriting sentences in order to avoid certain grammar constructions. In this interface, students must first identify and then fix the errors in the paragraph.

Feedback Conditions

In the immediate feedback condition, student edits turn green if they are correct and red if they are incorrect. In the delayed feedback condition, all edits are shown in blue until the student indicates that they have finished working with the paragraph. At that point, the edits are graded and labeled correct (green) or incorrect (red). Students are required to fix all the incorrect edits before moving to the next problem.
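The two feedback conditions can be sketched as a small display rule. This is an illustrative sketch only: the function names and the representation of edits as a list of booleans are hypothetical and do not describe the tutors' actual implementation.

```python
def edit_colors(edits, condition, finished=False):
    """Return the display color for each edit.

    `edits` is a list of booleans (True means the edit is correct).
    `finished` only matters in the delayed condition: it is True once the
    student has indicated that they are done with the paragraph.
    """
    if condition not in ("immediate", "delayed"):
        raise ValueError(f"unknown condition: {condition!r}")
    if condition == "delayed" and not finished:
        # Ungraded edits are all shown in blue.
        return ["blue"] * len(edits)
    return ["green" if is_correct else "red" for is_correct in edits]


def can_advance(edits, condition, finished=False):
    """Students may move on only when every edit is graded and correct."""
    return all(color == "green" for color in edit_colors(edits, condition, finished))


print(edit_colors([True, False], "immediate"))               # ['green', 'red']
print(edit_colors([True, False], "delayed"))                 # ['blue', 'blue']
print(edit_colors([True, False], "delayed", finished=True))  # ['green', 'red']
```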

Expected Contributions

In addition to the software contributions of developing two article tutors, the study will provide a rigorous evaluation of the role of feedback and scaffolding for language learning and production. The practical implications of this work include a better understanding of which instructional techniques lead to robust learning of article use. This work will widen the scope of previous learning science research by introducing the language learning domain to the debates of when to present feedback and the risks/benefits of scaffolding during learning.

Papers and Presentations

Papers

Wylie, R., Mitamura, T., Koedinger, K., Rankin, J. (2007) Doing more than Teaching Students: Opportunities for CALL in the Learning Sciences. Proceedings of SLaTE Workshop on Speech and Language Technology in Education. Farmington, Pennsylvania. October 1-3, 2007.

Wylie, R. (2007) Are we asking the right questions? Understanding which tasks lead to the robust learning of English grammar. Accepted as a Young Researchers Track paper at the 13th International Conference on Artificial Intelligence in Education. Marina del Rey, California. July 9 – 13, 2007.

Presentations and Posters

Wylie, R. (2007) Small Words, Big Challenges: Identifying the Difficulties in Learning the English Article System. IES Research Conference. Washington DC. June 7 – 8, 2007.

Wylie, R., Mitamura, T., Rankin, J., Koedinger, K. (2007) Developing Tutoring Systems for Classroom and Research Use: A look at two English Article Tutors. Presentation at the Computer Assisted Language Instruction Consortium (CALICO). San Marcos, Texas. May 23 – 26, 2007.

Wylie, R., Mitamura, T., Rankin, J. (2006) From Practice to Production: Developing Tutoring Systems for English Article Use. Presentation at the Three Rivers Teachers of English to Speakers of Other Languages (3RTESOL) Conference. Pittsburgh, Pennsylvania. October 28, 2006.

Wylie, R., Mitamura, T., Rankin, J., Koedinger, K., MacWhinney, B. (2006) Developing Intelligent Tutoring Systems for Language Learning. Science of Learning Center Symposium at the Society for Neuroscience conference. Atlanta, Georgia. October 13, 2006.

Wylie, R., Mitamura, T., Rankin, J., Koedinger, K. (2006) Two Tutors, One Goal: Two tutoring systems for teaching English articles. University of Pittsburgh’s Multimedia Showcase. Pittsburgh, Pennsylvania. September 27, 2006.

References

Koedinger, K. R., Aleven, V., Heffernan, T., McLaren, B., & Hockenberry, M. (2004). Opening the Door to Non-Programmers: Authoring Intelligent Tutor Behavior by Demonstration. In the Proceedings of 7th Annual Intelligent Tutoring Systems Conference. Maceio, Brazil.

Master, P. (1997). The English Article System: Acquisition, Function, and Pedagogy. System, 25(2), 215-232.

Mathan, S. & Koedinger, K. R. (2003). Recasting the feedback debate: Benefits of tutoring error detection and correction skills. In U. Hoppe, F. Verdejo, & J. Kay (Eds.), Artificial Intelligence in Education: Shaping the Future of Learning through Intelligent Technologies, Proceedings of AI-ED 2003 (pp. 13-18). Amsterdam, IOS Press.

Sweller, J., van Merriënboer, J., & Paas, F. (1998). Cognitive architecture and instructional design. Educational Psychology Review, 10, 251-296.